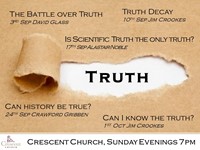

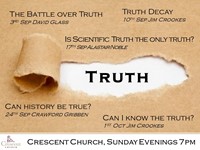

Is Scientific Truth The Only Truth?

Speaker: Alastair Noble

- Sunday, September 17, 2017

- Evening

Christians claim that the Gospel is true. Underlying that claim is the concept of truth as absolute, objective, and universal. However, our society increasingly sees truth as a product of culture, psychology, and power. "Truth is what your peers let you get away with saying," as the philosopher Richard Rorty put it. In such a relativistic culture, where something can be true for me but not for you, how can the case for Christianity be made? What exactly did our Lord mean when He claimed to be "the Truth"?